Behind the Scenes of the Viral Pomelli Photoshoot Launch

A backstage look at launching Pomelli’s newest feature, with the PM and engineer who helped build it.

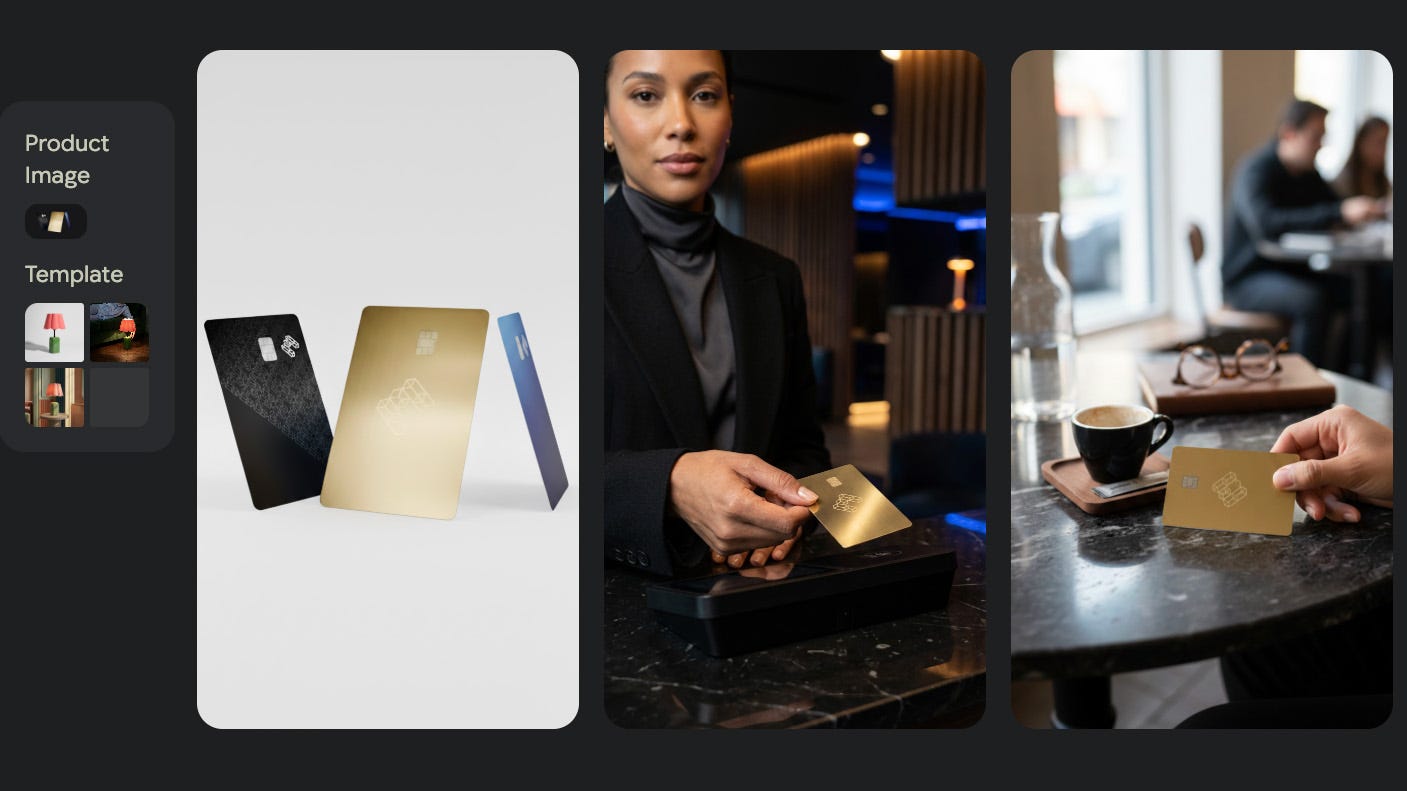

Last week, we launched a new “Photoshoot” feature for Pomelli.

The idea was simple: give small businesses the ability to generate high-quality, on-brand product photography without a physical studio. We knew it was useful, but we didn’t expect it to go viral.

The announcement video racked up over 23 million views on X, not to mention over 50k likes and bookmarks!

When something resonates that widely, it’s usually because a thousand tiny decisions went right. But those decisions are often messy, debated, and made right down to the wire.

So for this week’s post, I wanted to do something different. Instead of just sharing my thoughts on AI theory, I sat down with Daniel (Product Manager) and Nir (Engineer) - two folks from the team who actually built this feature - to talk about the reality of shipping generative AI products.

Here are the raw takeaways from the front lines.

1. The Quality vs. Timeline Trade-off

One of the hardest parts of building with GenAI is deciding when something is “good enough.”

Quality in this space will never be 100% perfect. And right now, that is okay.

We had rigorous debates about quality literally right up until the night before launch. It wasn’t about hitting a hard metric; it was a constant calibration. Each slight improvement in quality tends to come at the expense of latency, cost, or the timeline.

Daniel put it perfectly: “It’s subjective and you need to have an opinion.”

You can’t just follow a checklist. You have to make the call. We decided to launch knowing the output wouldn’t be flawless 100% of the time, but we felt confident for a few key reasons:

Optionality: We generate multiple options. Even if one misses the mark, the user can pick the best one.

Agency: Users can always generate more options or edit the output to tweak it. They aren’t stuck with a single bad result.

The “Worst It Will Ever Be” Rule: We know this is the worst these models will ever be. We are betting on the curve.

This is also where being at Google is a superpower. When we notice specific quality gaps - like small text rendering or skin texture - we don’t just work around them; we can give that feedback directly to the model teams. We are building the feedback loop that improves the foundation for everyone.

The Lesson: Quality isn’t a static bar; it’s a dynamic trade-off. Don’t let the pursuit of 100% perfection stop you from shipping a tool that is 90% magic today. And the results we’ve been seeing with Pomelli Photoshoot truly feel like magic (like this coloring book, which I then turned into an animated creative - all in Pomelli).

2. The “Loss Buckets” Framework

Quality in AI is subjective. How do you know when a model is “good enough” to launch?

Nir introduced a concept I love: Loss Buckets.

Instead of trying to fix everything at once, the team categorizes failures into specific buckets. For Pomelli, this included things like:

Small Text Issues: The model struggles with tiny font rendering.

Skin Tone/Texture: Does the skin look plastic or real?

Physics/Interaction: A hand holding something, or a phone screen.

Speaking of physics, we definitely saw a few of these come up after launch. One user got an image of a person who had a few too many hands, and another was shown holding a phone…backwards. These are perfect examples of image generations that fell within a “loss bucket”, and the team is now actively rolling out more improvements post-launch. Quality is a hill you continue to climb after day one.

The Insight: Not all buckets are created equal.

As Nir explained, you have to ruthlessly triage. One bucket might be trivial to fix with a few prompt tweaks. Another might be a fundamental limitation of the model architecture that you could spend weeks fighting without making a dent.

The Lesson: Don’t burn cycles trying to fix something that might be a model limitation today; fix the product experience around it. Identify which buckets you can actually move the needle on, and accept that some buckets will only be solved by the next generation of the model.
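For readers who want to try this on their own product, here is a minimal sketch of what bucket triage could look like in code. It is not how the Pomelli team tracks failures. The bucket names, the 1-to-5 `fix_effort` scale, and the `max_effort` cutoff are all assumptions made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Bucket:
    name: str
    # Hypothetical scale: 1 = a quick prompt tweak, 5 = likely a model limitation.
    fix_effort: int


def triage(failures, buckets, max_effort=3):
    """Split observed failures into buckets to fix now and buckets to wait on.

    failures: list of bucket names, one entry per bad generation that was reviewed.
    buckets: dict mapping bucket name -> Bucket.
    max_effort: assumed cutoff; anything harder is left for the next model.
    """
    counts = Counter(failures)
    fix_now, wait = [], []
    for name, count in counts.items():
        target = fix_now if buckets[name].fix_effort <= max_effort else wait
        target.append((name, count))
    # Among fixable buckets, put the most failures removed per unit of effort first.
    fix_now.sort(key=lambda item: item[1] / buckets[item[0]].fix_effort, reverse=True)
    # Keep the "wait" list ordered by how often the failure shows up.
    wait.sort(key=lambda item: item[1], reverse=True)
    return fix_now, wait


# Made-up review data, purely for illustration.
example_buckets = {
    "small_text": Bucket("small_text", fix_effort=1),
    "skin_texture": Bucket("skin_texture", fix_effort=2),
    "physics": Bucket("physics", fix_effort=5),
}
example_failures = ["small_text"] * 5 + ["skin_texture"] * 3 + ["physics"] * 4
fix_now, wait = triage(example_failures, example_buckets)
```

The point of the split is that a frequent bucket is not automatically the one to work on: in this made-up data, the physics bucket shows up often but lands in the "wait" list because its assumed effort is above the cutoff.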

3. The 4-Iteration Rule

When iterating on prompts to fix those loss buckets, there is a point where you have to stop.

As Nir pointed out, you can often get 80% of the way there with just a handful of iterations. But after that initial burst of progress, you hit a wall. If you find yourself endlessly tweaking a prompt and only seeing marginal gains, you are no longer solving a prompt problem; you are fighting the model architecture itself.

The Lesson: Learn to recognize the plateau. If you can’t fix a prompt issue quickly, stop. Either accept the limitation, change the feature, or wait for the next model. Don’t fall into the trap of infinite tweaking for minimal gain.
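If you want to make "recognize the plateau" a little less gut-driven, one option is a simple stopping check like the sketch below. It assumes you record some quality score after each prompt iteration (for example, the share of sample outputs a reviewer rates acceptable). The score, the `min_gain` threshold, and the `patience` value are hypothetical choices for illustration, not numbers from the team.

```python
def hit_plateau(scores, min_gain=0.02, patience=2):
    """Return True when the last `patience` iterations each gained less than `min_gain`.

    scores: quality score after each prompt iteration, oldest first.
    min_gain: assumed smallest improvement still worth another iteration.
    patience: assumed number of consecutive weak iterations before stopping.
    """
    if len(scores) <= patience:
        # Not enough history yet to judge.
        return False
    recent_gains = [
        scores[i] - scores[i - 1]
        for i in range(len(scores) - patience, len(scores))
    ]
    return all(gain < min_gain for gain in recent_gains)


# Made-up scores: a fast early climb, then marginal gains.
still_climbing = hit_plateau([0.50, 0.70, 0.80])
plateaued = hit_plateau([0.50, 0.70, 0.80, 0.81, 0.815])
```

A check like this does not tell you why progress stalled; it only flags the moment to ask whether you are still solving a prompt problem or fighting the model.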

4. The Surprise: We All Became Photographers

One of the most unexpected outcomes of building this feature wasn’t technical - it was cultural.

Daniel: “I think a big thing has been how much our whole team now knows about photoshoots. I feel like we’re all experts at this point. We know shot types, we know poses, we know lighting.”

To build a tool for photographers, the engineers and PMs had to become photographers. They weren’t just coding; they were debating the merits of “Rembrandt lighting” vs. “butterfly lighting.”

The Lesson: You can’t vibe code taste. To build a generative tool that outputs quality, the builders need to develop domain expertise in the output itself. You have to know what “good” looks like to tell the model how to make it.

The Launch Reality

We made decisions about this product literally the day before launch. We swapped templates, adjusted routing logic, and made trade-offs on quality vs. speed right up until the button was pushed.

That is the reality of building in this space. The models are changing, the capabilities are changing, and the “best practice” is whatever worked yesterday.

It’s messy, it’s fast, and when it works - like it did last week - it’s incredibly fun.

(A huge thank you to Daniel and Nir for letting me drag them into a conference room to have this conversation.)