I Made AI Bingo Cards for Two Years. Then I Stopped.

What I learned - slowly - about the difference between predicting the future and navigating it.

January 1st used to mean a bingo card.

I started the tradition as a way to think out loud about what the year in AI might bring - a grid of bets, some obvious, some a little out there. I’d post it, people would follow along, we’d check squares off together. Some hits, some misses, plenty of gray zones. It was a genuinely fun exercise.

But I’ve started thinking of those cards differently. They were less predictions than they were bets - and there’s a meaningful difference. A prediction has a binary outcome. It happened or it didn’t. A bet carries a different energy: you’re putting something down, watching how it plays out, and staying close enough to the action to update as new information arrives. The bingo cards were actually pretty good at that. What they weren’t great at was forcing me to ask: what am I going to do about it?

At the start of this year, I scrapped the card entirely. Instead I wrote out five guiding principles for how I was going to navigate what’s coming - not score it from the sidelines. It felt like a small shift when I made it. It wasn’t.

That shift - from prediction to navigation - is the foundation of everything in The Builder’s Compass, and it’s what today’s post is about.

Below is the Prologue. It covers the article I read on a ridge in Bernal Heights that made me feel like something big was coming (a walk I’ve since taken with some of the most interesting founders and builders I know), what the years of bingo cards taught me about the limits of watching versus building, how I found myself setting up my own autonomous agent on a dedicated Mac Mini and debugging it with AI when it inevitably broke, and why the writer of that original article now wanders the halls of Google Labs.

If this is your first time here, welcome. This book is being written in public, one chapter at a time, right here on this Substack. The Prologue is where it starts.

THE BUILDER’S COMPASS

A Practitioner’s Playbook for Building 0-to-1 AI Products

Author: Jaclyn Konzelmann, Director of Product Management, Google Labs

PROLOGUE: Why I Don’t Make Predictions

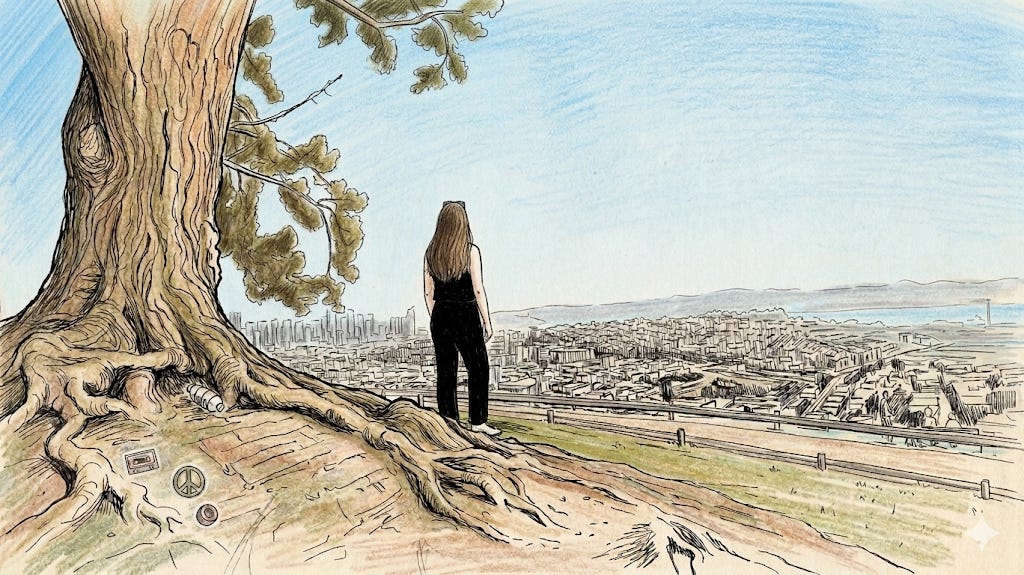

In the spring of 2022, I was walking the ridge at the top of Bernal Heights - we had just moved to the neighborhood - reading an article on my phone that I couldn’t put down.

It was a long feature in the New York Times Magazine. The title was “A.I. Is Mastering Language. Should We Trust What It Says?” It was about large language models - GPT-3, specifically. ChatGPT didn’t exist yet. Most people hadn’t heard the term “large language model” used outside a research context. But this piece had gone deep: into the labs, into the actual capabilities, into what the technology could and couldn’t do at that moment.

I looked up from my phone at the city spread out below me.

I didn’t know the exact shape of what was coming. I didn’t know the timeline, the companies that would win, or which applications would turn out to matter. But I could feel something - that particular clarity you get when you recognize the shape of a wave while it’s still far out. You don’t know exactly when it’ll arrive. You know you want to be in the water before it does.

What made that feeling credible wasn’t that I was some visionary. It was that I already knew something about building on machine learning models. At the time, I was on the Google Assistant speech team. I had spent years shipping features on top of non-deterministic models - continued conversation and quick phrases on the speech side, and then face match and quick gestures as I expanded into camera-based ML features. Different modalities, same fundamental challenge: building products on top of models that don’t behave the way deterministic software does.

I knew what it felt like when a model was “good enough” to build on. I knew how to design around the gap between what a model could do in a demo and what it could do reliably at scale. I knew how to think about failure modes, acceptable error rates, and what it meant to launch something that would sometimes be wrong.

When I read that article and felt what I felt, I had a frame for it. Something big was brewing - and I had some of the tools to actually work with it.

I moved to Google Labs not long after to build products on large language models, specifically because I’d felt that thing on the ridge and didn’t want to watch from the shore.

That ridge has seen a lot of conversations since. Some of the most interesting people I know - founders, builders, the kind of people who’ve made big bets and lived to tell about it - have stood up there with me and looked out at the same view. The city looks the same. What we’re building keeps changing.

Here’s the detail that still catches me off guard: the journalist who wrote that article now works in Google Labs. I see him wandering the halls from time to time. The person whose piece made me feel like the ground was shifting is now, in his own way, helping shape what comes next. The world is small. The arc is long.

The Bingo Card Years

For the next couple of years, I channeled that feeling into something fun: annual AI bingo cards. A grid of bets on what the year would bring - some obvious, some a little out there - published publicly, tracked together with readers. Some landed. A lot landed sideways. A few missed entirely. I did mid-year reviews, engaged with readers about the ones in gray zones. People enjoyed it. I enjoyed it.

I want to be clear: I don’t think making predictions is wrong. Plenty of people I deeply respect - researchers, investors, builders - do it rigorously and it sharpens their thinking. What I’ve come to believe is that “prediction” frames the exercise as binary: it happened or it didn’t. What I was actually doing was something more like betting - putting a stake in the ground, watching how things developed, staying close enough to update when the picture changed. That’s a different and more useful orientation.

I’d like to tell you I immediately understood that prediction wasn’t the point. I didn’t.

What the bingo cards weren’t forcing me to do was ask the next question: given my current read on where things are going, what am I going to do about it?

That’s the question I started asking instead. At the start of this year, I scrapped the card and wrote out five guiding principles for how I was going to navigate what’s coming. Not score it. Navigate it.

The shift felt small. It wasn’t. And that shift - from prediction to navigation - is the foundation of everything in this book.

What the Moments Actually Feel Like

A few moments in modern history have genuinely changed what it means to build. Not incrementally. Structurally. The PC. The internet. Mobile. ChatGPT.

Each one didn’t just change the tools. It changed who could build, and what building even meant.

I’ve been professionally close to a few of these transitions. And I’ve watched the same pattern play out each time: the people who navigate the shift well aren’t the ones who predicted it most accurately. They’re the ones who were already in motion when it arrived. They’d built up the instincts, the working knowledge, the relationships - through continuous building, not continuous analyzing - so that when clarity came (even partial clarity, even provisional clarity), they could move.

We’re in one of those structural moments now. The whole field has been heading here for a while. Andrej Karpathy has been pointing at it - his post on agency versus intelligence got millions of impressions on its own. Leading researchers across every major lab have been circling it. The shift toward agents - AI systems that don’t just respond but plan, act, and execute across tools and time - has been visible on the horizon for anyone paying close attention. What’s changed recently isn’t the idea. It’s that the idea is now real enough to build on.

I know that because I’ve been building on it. And what I’ve found is exactly what you’d expect from any genuine platform shift: the technology is simultaneously more capable than most people realize and more jagged than any demo lets on. The path forward is through contact with it - not observation of it.

Going Deep Before You Can Explain Why

When I decided to explore agentic AI seriously, I had a head full of ideas about what I’d want an agent to actually do. Not abstract capabilities - specific things. Triage my morning. Run a research thread in the background. Help me plan the twins’ birthday without me having to hold the whole thing in my head. Build me things I could actually use.

OpenClaw - an open-source framework for building autonomous personal agents - promised to make this accessible. I want to be honest about how that went: it was not easy to set up. The installation was cumbersome, the edges were jagged, and things broke in ways that were genuinely hard to diagnose. I got through it largely by screenshotting error codes and throwing them into Gemini - asking it to help me debug the setup process, step by step. That itself was a lesson in what building at the frontier actually looks like: not elegant, not clean, but tractable if you’re willing to stay close to the problem.

My instance is named Lulubot. It runs on a dedicated Mac Mini, credential-isolated from my own accounts, with its own Gmail, GitHub, X handle, and Solana wallet (the last two of which I don’t actually have it use). It’s not an extension of me - it’s a separate entity I collaborate with. Over the weeks I’ve been building and living with it, the experience has been genuinely revealing in ways I couldn’t have gotten from reading about it.

That’s the whole point. I’m not telling you about Lulubot because it’s a finished success. I’m telling you because the process of building it has already changed how I think about what products are, what agents are, and where the real hard problems lie. The model is not the bottleneck (even if it does still have room to improve). The glue is - the integrations, the identity, the trust. That’s a product insight, not a research insight, and you can only get it by building.

Lulubot shows up throughout Part 2 of this book as a live example of what it looks like to explore a technology while the thesis is still forming. I started because I had a head full of ideas and a framework that promised to let me test them. The articulation - what this actually means for how products are built - is still developing. That’s not a disclaimer. That’s the honest state of building at the frontier.

One Thing Before We Start

There is no definitive truth in here.

I’m going to give you strong opinions. I’ll give you frameworks I’ve built and tested on real products, with real stakes. I’ll be specific about what worked and why I think it worked. But these are tools, not rules. The moment you treat a framework as a law, it stops serving you.

I’ve seen this happen to smart people more times than I can count. They take something that works in one context, apply it rigidly in a different one, and can’t figure out why it breaks - because they stopped thinking about the underlying logic and started following the surface pattern.

Read everything in here as: here’s what I’ve seen, here’s how I think about it, here’s how you might apply it. Then adapt. The adaptation is half the work.

How to Use This Book

The Builder’s Compass is an operating system, not a map.

A map assumes you know where you’re going. An operating system gives you tools for navigating whatever comes next. The four parts follow a logic: first, the mindset shift. Then the specific tools for building. Then the honest stories from the front lines of Google Labs - including the dead ends and shutdowns, not just the wins. Then the question of the team - how I hire for one, what I look for in product builders, and the interview prep, questions, and characteristics that go into finding elite AI PMs. Whether you’re building a team or trying to get hired onto one, Part 4 is for you.

Woven throughout: two projects I’m running live alongside this book. The Meta-Project - an AI idea generator - starts in Part 2 and pays off in the Epilogue. Lulubot, my OpenClaw instance, runs through Part 2 as the living proof of what I’m writing about.

Neither is here because it’s a success. Both are here because the process of building them has taught me more than any outcome could. That’s the bet I’m making with this whole book.

One last thing.

I started this Prologue by saying I don’t make predictions. That’s not quite right. What I’ve stopped doing is treating prediction as the goal. The goal is to be in motion - building, learning, adapting - so that when the wave hits, you’re already in the water.

If you’re here because you want to be in the water: this book is for you.

Let’s start building.
