The PRD I Was Proud Of (And Why I'd Never Write It Today)

Chapter 1 of The Builder's Compass - how AI makes us rethink the old playbook

During my first Office Hours a few weeks ago, someone asked a question that stuck with me: “What are the traditional processes or workflows that are highest priority to unlearn right now?”

I started answering and found myself telling a story I hadn’t told in a while - about a feature I built years ago on Google Assistant, the weeks of specs it required, and an engineering manager who, when we caught up years later, still reflected on it as one of the highlights of his early career. And as I was talking through it, I realized how strongly I felt about the answer. The process we used back then was genuinely excellent work. It was also exactly the wrong way to build anything today.

That’s what Chapter 1 is about. Not a “PRDs are dead” hot take - something more specific. The economic conditions that made the PRD rational have fundamentally changed. The cost of building dropped. The cost of being wrong dropped. And when both costs fall at the same time, the case for front-loading everything into a spec before anyone builds anything falls apart.

The chapter also gets into something I’ve been watching on my own teams at Google Labs: the boundaries between PM, UX, and engineering are dissolving in real time. Designers are prototyping features. Engineers are shipping without waiting for specs. PMs are building working demos instead of writing documents. I share what that actually looks like in practice - including how we recently launched a feature that went viral, starting from a prototype rather than a spec.

I land on three shifts that carry through the rest of the book:

Rules → Goals

Define → Demonstrate

Optimize → Adapt

This is Chapter 1 of The Builder’s Compass. If you missed the Prologue, you can start there, but this chapter stands on its own.

For paid subscribers: below the chapter, I’m sharing the exact voice-to-prototype workflow I use to go from a rambling idea to a working app in under an hour. I built the first version of a project live for this post — an AI idea generator I’m calling Spark — and the model confidently suggested I build something called a “Laundry-Salad Oracle.” The prompt, the process, and the absurd result are all yours.

CHAPTER 1

The Death of the Deterministic Playbook

There is a PRD sitting somewhere in my Google Drive that I am genuinely proud of.

I wrote it years ago, when I was building a feature called Multilingual Assistant - a setting that let users choose two languages for their Google Assistant speaker. Simple premise. The assistant would listen, detect which language was being spoken, and respond accordingly. You wouldn’t have to switch anything. It would just know.

What looked simple on the surface was, underneath, a web of interacting states that had to be accounted for before a single line of code was written. The Assistant language setting affected all your devices - including your phone, which had its own system language. What happened if a user picked two languages that didn’t match their phone’s system language? Do you force a match? Surface a notification? How do you account for model limitations - because it turned out the model wasn’t equally good at all language pairs. If you selected a certain primary language, some secondaries were unavailable. And then the impossible state: what if a user already had a valid language pair, then updated their primary to something incompatible with their existing secondary? What does the UI show? What does it do?

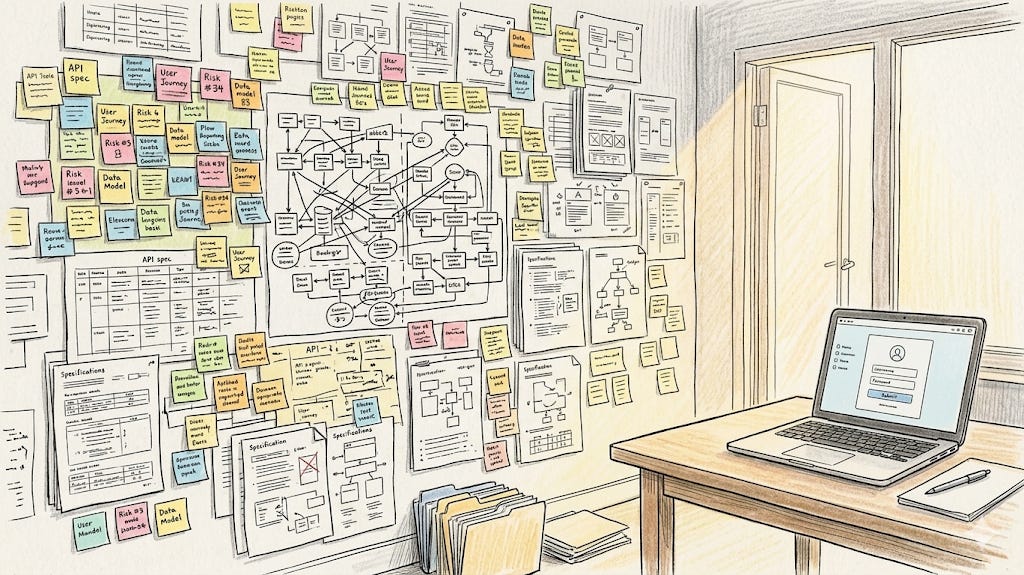

I spent weeks mapping every branch of that logic. I worked with UX to mock up every error state, every banner, every greyed-out dropdown. Every screen that could possibly exist, we designed. Every state transition, we documented.

The engineering manager I worked with on that project brought it up years later, when we’d both long since moved on to different teams and done a lot more in the interim. We caught up, and unprompted, he named it as one of the highlights of his early career - the rhythm we had, the thoroughness of the process, the way the whole thing held together. I remember feeling proud all over again when he said it.

And then I thought about what I would say if a PM walked into my office today and proposed running a product feature that way for the next two months.

I would stop them right there - because there’s a faster, better way to get to the same place, and spending two months in a spec doc before anyone builds anything isn’t it.

The Old Playbook Was Rational

Before I explain why, I want to give the PRD its due. My early career was built on learning how to write them well — and that discipline shaped how I think about products in ways I still draw on. The PRD wasn’t a bureaucratic artifact someone invented to slow things down. It was how serious product people did serious work. The skills it required - thinking through edge cases, anticipating failure modes, forcing clarity before you build - those skills didn’t become worthless. But the artifact itself? It was a rational response to a specific cost structure. And that cost structure is changing.

Engineering time was expensive. Writing code was slow. If your team spent three weeks building something that turned out to be wrong - a state you hadn’t accounted for, a conflict you hadn’t caught - you’d have lost three weeks. In a world where building is expensive, you protect that investment by front-loading all the thinking. You spec everything you can think of before anyone writes a line of code. The PRD is the safety net.

There’s also an important nuance in the Multilingual Assistant story that’s easy to miss. The language detection itself - the part where the assistant listened and figured out which language you were speaking - was nondeterministic. The model did what the model did. We didn’t control that layer. What I was speccing so carefully was the settings layer: the UI states, the valid and invalid language pairs, the conflict resolution logic. That layer was entirely deterministic. Every possible state was knowable in advance. And in a world where building that settings layer took weeks of engineering time, speccing every state before anyone touched the code was the right call.
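To make "every possible state was knowable in advance" concrete, here is a minimal sketch of the incompatible-secondary case as a pure function. Everything in it is an assumption for illustration: the language codes, the `UNAVAILABLE_SECONDARIES` table, and the policy of clearing the secondary and showing a notice are hypothetical, not what the Multilingual Assistant actually shipped.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical constraint table: for each primary language, the secondaries
# the model cannot pair with it. Invented values, not real product data.
UNAVAILABLE_SECONDARIES = {"ja": {"de", "fr"}, "de": {"ja"}}


@dataclass(frozen=True)
class LanguageSettings:
    primary: str
    secondary: Optional[str]      # None means single-language mode
    notice: Optional[str] = None  # message the UI would surface, if any


def change_primary(current: LanguageSettings, new_primary: str) -> LanguageSettings:
    """Apply a primary-language change and resolve any conflict with the secondary."""
    blocked = UNAVAILABLE_SECONDARIES.get(new_primary, set())
    secondary = current.secondary
    still_valid = (
        secondary is None
        or (secondary != new_primary and secondary not in blocked)
    )
    if still_valid:
        return LanguageSettings(new_primary, secondary)
    # Conflict: one possible policy is to drop the secondary and tell the user why.
    return LanguageSettings(
        new_primary,
        None,
        notice=f"{secondary} is not available with {new_primary}; choose another second language.",
    )


before = LanguageSettings(primary="en", secondary="de")
after = change_primary(before, "ja")
print(after)
```

Because the function is deterministic, every branch can be listed and tested up front, which is exactly the property that made exhaustive speccing possible for this layer.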

The PRD was the right tool for that world. I want to say it plainly, because this chapter isn’t an argument for burning down everything that came before. It’s an argument that the world is changing - and the tools have to change with it.

The Cost Structure Shifted. On Both Sides.

Two things happened, and they compound each other.

First: the cost of building dropped dramatically. Models write code now. Agentic coding has compressed what used to take weeks into hours. The expensive, scarce resource the PRD was designed to protect got significantly cheaper. When the cost of building falls, the economics of front-loading all the thinking changes with it.

Second: the cost of being wrong dropped too. Getting something wrong in code before you push to production used to mean rework measured in weeks. Now it means course-correcting in hours. You can revisit decisions that would previously have been locked in for months.

I want to be precise about what I mean here, though. The cost of being wrong in code has dropped. The cost of being wrong with users is a different calculation. Every time we ship something and walk it back, there’s a tax - on trust, on comprehension, on the mental load of people trying to keep up with tools that keep changing underneath them. That tax is real, even if it’s harder to measure than engineering hours.

I’m still forming my full view on what this means for how fast to ship and when to hold back. But for this chapter’s argument about why the rigid PRD no longer makes sense as a starting point, the economics are clear: we don’t need to protect against wrong decisions in code the way we once did. Rewriting is fast. Reconsidering is cheap. The case for front-loading everything into a spec before anyone builds anything gets weaker when the cost it was protecting against has fallen this far.

When both costs drop at the same time, the exhaustive spec stops being disciplined. It starts being overhead.

We’re still working out exactly what this means in practice. But the direction is clear. The conditions that made the PRD rational no longer hold the way they once did.

Still Forming: There’s a principle in software development known as Brooks’s Law, from Fred Brooks’s book The Mythical Man-Month: adding engineers to a late project makes it later, because coordination overhead scales faster than output. I keep thinking about what agentic coding does to that equation. When you’re coordinating agents instead of people, the communication tax looks different. Maybe it disappears. Maybe it just moves somewhere else. I don’t have a clean answer yet - but it feels like something worth watching as these tools mature.

The Boundaries Dissolved

When building was expensive and tools were specialized, it made sense to have distinct roles with distinct domains.

Writing specs took real time - collecting user feedback, synthesizing it, documenting every requirement in enough detail that an engineer could build from it without guessing. That was knowledge work, and it made sense to have someone dedicated to it. PMs wrote the specs because they were closest to the user and the strategy, and because the sheer act of producing a well-written spec was a meaningful part of the job.

Designers created the mocks because visual tools took time to learn - from the early days of Photoshop and Sketch to, more recently, Figma, which disrupted everything that came before it by making design more collaborative and accessible. Mastering any of these tools required both studying what good design looked like and putting in real effort and time to learn the software itself. That combination - taste plus tooling fluency - was not easy to develop. And now there’s another disruption forming: design that starts in code. At the end of the day, software designs live in code - and tools like Stitch and Claude are starting to collapse the distance between the design and its final form.

Engineers wrote the code because there was no other option. If you wanted to build anything - even a rough prototype to test an idea - you needed someone who had spent years learning how. The knowledge required to turn a concept into working software wasn’t something you could borrow or fake. That dependency shaped everything: timelines, team structures, how carefully you had to think before you asked anyone to build anything. Now that dependency is gone. Anyone can spin up a working prototype, prove out a concept, or build something functional enough to get real feedback in an afternoon, using tools like Google AI Studio, Claude, or Lovable. The specific tools will keep changing - they already have, many times over - but the direction won’t: the barrier to building something real keeps falling.

The division of labor was practical. Each role was a gate because each gate was protecting something valuable.

That logic is breaking down across all three. Generative AI made writing cheap. It made prototyping cheap. It made code cheap. What it didn’t make cheap is good judgment - knowing what to build, why it matters, and whether it actually works for the people using it. Every function in the PM-UX-Eng triad is being disrupted.

Here’s what it looks like in practice on my teams right now.

The UX designers I work with haven’t abandoned Figma - but they’ve added something to it. Prototypes are now part of how they think, not just how they hand off. It happened gradually - a few of them leaned in first, then it spread.

One designer came back from maternity leave recently: when she left, everything was Figma files. Within her first couple of weeks back, she was already building prototypes to show her thinking. She didn’t need a training program. She came back to a team where the default had shifted, and she adapted.

One designer on the team has been prototyping features she thinks we should build - de-risking product ideas before they ever reach a planning conversation. That’s traditionally PM work. But the prototype is the new currency of conviction, and it doesn’t care about your job title. She can now move an idea from instinct to evidence without waiting for anyone else to build it first.

On the engineering side, something equally interesting is happening. One team has been building out new features without waiting for PM or UX input first. The design system in the codebase is robust enough that engineers can make reasonable implementation guesses, get something to a usable state, then bring in PM and UX for an audit. Any issues get filed as individual bugs and addressed from there.

Two things happen as a result. Engineers move faster. And the design language gets more robust with each iteration - which means the next engineer-led feature comes out a little more polished than the last. It compounds. PM and UX get freed up to race ahead on the bold new ideas, while smaller gap-filling features get handled without the traditional handoff overhead.

In Practice: The mobile responsive version of Pomelli was primarily the work of one engineer on the team — built in her spare time.

On the product side, PMs on my team are now building working prototypes to imagine new features and explore product directions - and I’ve started to notice a few distinct patterns in how and when they reach for them.

The first is for the big swings. Not every feature is equal - some are incremental improvements, others are chunky, meaningful launches that change how users experience the product entirely. For those weightier moments, a prototype does something a spec or a vision doc can’t: it shows that the idea is possible, and it shows how you’re envisioning it. That’s a different kind of stakeholder conversation. You’re not asking people to imagine it. You’re showing them.

The second is for the pivots. When it’s time to rethink a product direction, to reframe what we’re building and why beyond just adding a feature, a prototype communicates a vision faster than words do. When you’re trying to get early conviction on something net new or a meaningful shift in direction, showing is almost always more persuasive than describing. A working prototype gets people bought in before you’ve spent weeks writing a spec nobody will read the same way twice.

The third I’ve seen playing out in hiring conversations. Some of my favorite candidate conversations recently have ended with a live demo - them walking me through something they built, showing me how they think, letting the prototype speak for itself. It’s become one of the clearest signals I look for.

In Practice: The Pomelli PM started the recent Photoshoot feature - which went viral when we launched it - with a prototype, not a spec. A working thing that showed the idea, not a document that described it.

What replaced the old role boundaries is something harder to manage and more effective when it works: a team where everyone has enough taste and agency to move ideas forward, and where the best call wins regardless of who made it.

That requires a different kind of leadership. Less gatekeeping, more taste-setting - getting everyone calibrated enough that good judgment is distributed rather than concentrated. Pick your battles. Know which decisions need a strong opinion and which ones the team should own. The goal isn’t a team waiting for permission. It’s a team that knows how to act.

Same Problem, Different Approach

Let me run the Multilingual Assistant through my current workflow. Same feature. Same complexity. Different starting point.

First, I would open a voice note and brain dump the constraints. “Okay, here’s the problem. We have a phone language, a speaker language, and a model limitation on certain language pairs. Here are the conflict scenarios I can think of.” Five minutes of talking, unstructured, not edited for clarity.

I’d hand that to Gemini and ask it to generate a matrix of all valid and invalid states. The thing I spent days constructing manually? Now a starting point I can critique, not a document I have to build from scratch.
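As a rough illustration of what that generated matrix might look like once it is turned into something executable, here is a minimal sketch. The language list and the `UNSUPPORTED_PAIRS` set are made up for the example; the real constraints would come from the model team, and the actual output from Gemini would need the same critique described above.

```python
from itertools import permutations

# Hypothetical inputs: these codes and unsupported pairs are invented
# for illustration and are not the real Assistant constraints.
LANGUAGES = ["en", "es", "fr", "de", "ja"]
UNSUPPORTED_PAIRS = {("ja", "de"), ("ja", "fr"), ("de", "ja")}


def is_valid_pair(primary, secondary):
    """A pair is valid if the two languages differ and the pairing is supported."""
    return primary != secondary and (primary, secondary) not in UNSUPPORTED_PAIRS


def build_state_matrix(languages):
    """Map every ordered (primary, secondary) pair of distinct languages to valid/invalid."""
    return {
        (primary, secondary): is_valid_pair(primary, secondary)
        for primary, secondary in permutations(languages, 2)
    }


matrix = build_state_matrix(LANGUAGES)
invalid = sorted(pair for pair, ok in matrix.items() if not ok)
print(f"{len(matrix)} ordered pairs, {len(invalid)} invalid: {invalid}")
```

A table like this is cheap to regenerate whenever the supported pairs change, which is what makes it a starting point to critique rather than a document to maintain by hand.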

Then I’d go into AI Studio and vibe-code a functional settings page that actually implements that logic. Not a description of the error state. Not a mock. A working thing - something you can click through, something that behaves. I’d hand that to the engineer and say: here’s what I’m thinking, here’s how it should behave, come find me if you hit something this doesn’t account for and we’ll work through it together.

Old way: documentation to prevent failure. New way: prototyping to demonstrate success.

The engineering manager who brought it up years later as a highlight of his early career - I understand why. It was excellent work. But the goal was never the PRD. The goal was building something that worked well for users. We had to write the PRD to get there. Now we don’t.

There’s another thing worth saying about that old feature. The language detection model wasn’t static. It got better over time. Some of the edge cases I specced so carefully - the ones I lost sleep over - probably resolved themselves as the model improved. I was writing a safety net against problems that the model was quietly solving while I was documenting them. In the old world, that would have been a painful realization - because a better model meant the settings logic needed to change, and that logic had taken weeks to spec and weeks to build. Revisiting it felt daunting. Now? You update the logic in a fraction of the time. The model gets better. The settings catch up. The user gets a better experience. The whole thing moves forward instead of locking you in place.

Which leads to something I think about constantly when building now: try to build products that get better as the model gets better. Design for improvement, not just for the current state. If you can do that, if you can put yourself in a position where every model update is a tailwind rather than a disruption, you have built something with a structural advantage. You’re using the pace of change to propel you forward.

The New Landscape Is Still Forming

I want to be honest about one thing: the replacement for the PRD hasn’t fully settled yet.

Different teams are running different experiments. Some are deliberately under-speccing - leaving gaps in the brief on purpose to see what the model does to fill them. Sometimes it surprises you, in good ways. A behavior you hadn’t thought to specify turns out to be exactly right. The model’s interpretation of the goal is better than what you would have prescribed.

Others are working with a product.md - a canonical document the model can actually read, a source of truth that lives alongside the codebase rather than preceding it. Others are writing living specs, documents that evolve with the product rather than front-running it.

I’ve been drawn to under-speccing in early ideation. Purposely not closing every door, and seeing what comes through the ones I left open. It’s uncomfortable if you’re used to having everything mapped. It’s also produced ideas I wouldn’t have found by mapping everything.

I don’t know which of these approaches will win out. I’m not sure anyone does. What I’m confident about is the direction: the old way was built for a world that doesn’t exist anymore. New approaches are emerging, and the builders who will figure out what works are the ones experimenting with them right now - not just reading about them.

Three Shifts Worth Naming

Everything I’ve described in this chapter comes down to three underlying shifts. They’re worth naming explicitly because they carry through everything in Part 1.

Rules → Goals. The old way was to enumerate the rules the product must follow. Every state, every branch, every edge case. The new way is to define the goal - what does success look like, what outcome are we driving toward - and let the model navigate to it. The Multilingual Assistant is a clean example: I was writing rules for a settings layer that sat on top of a model that didn’t operate by rules. The model had a goal. I was treating it like a decision tree.

Define → Demonstrate. The old artifact was a document that described what to build. The new artifact is a working thing that shows it. This is more than a format change. It’s a different theory of how alignment happens. A prototype communicates things a spec can’t. It shows behavior, not intention. It lets people react to the real thing, not their interpretation of a description. And in a world where entirely new kinds of products and features are becoming possible for the first time, a prototype does something else: it proves feasibility. For people who haven’t been building with AI, it can be genuinely unclear whether an idea is even possible. A working prototype answers that question before anyone has to debate it - and once people can see that something works, they start thinking about how to build on it.

Optimize → Adapt. The old posture was optimization: you had a known system, known inputs, known desired outputs, and you tuned toward the best version of that fixed thing. The new posture is adaptation: the model changes, the capabilities shift, what’s possible next month is different from what’s possible now. Instead of tuning a fixed target, you’re building something that can course-correct as the ground moves underneath it. Build products that get better as the model gets better. Put the tailwind at your back.

The old playbook died because the world it was built for changed. The question now is what replaces it. That’s the next chapter.

But first, I want to show you the workflow I described above in action. In the paid section below, I’m building the first version of a project live alongside this book: an AI idea generator called Spark. You’ll see the exact prompt I use to turn a rambling voice memo into a buildable spec, the prototype it generated in under an hour, and what happened when the model confidently suggested I build something called a “Laundry-Salad Oracle.” The whole workflow is yours to steal.